About the chair

The Chair of Computational Mathematics of Fundación Deusto at the University of Deusto, Bilbao (Basque Country, Spain) aims to develop an active research, training and outreach agenda in various areas of Applied Mathematics. The Chair is committed to the development of ground-breaking research in Partial Differential Equations, Control Theory, Numerical Analysis and Scientific Computing. These are key tools for technology transfer and for the interaction of Mathematics with other scientific disciplines such as Biology, Engineering, and Earth and Climate Sciences.

Enrique Zuazua

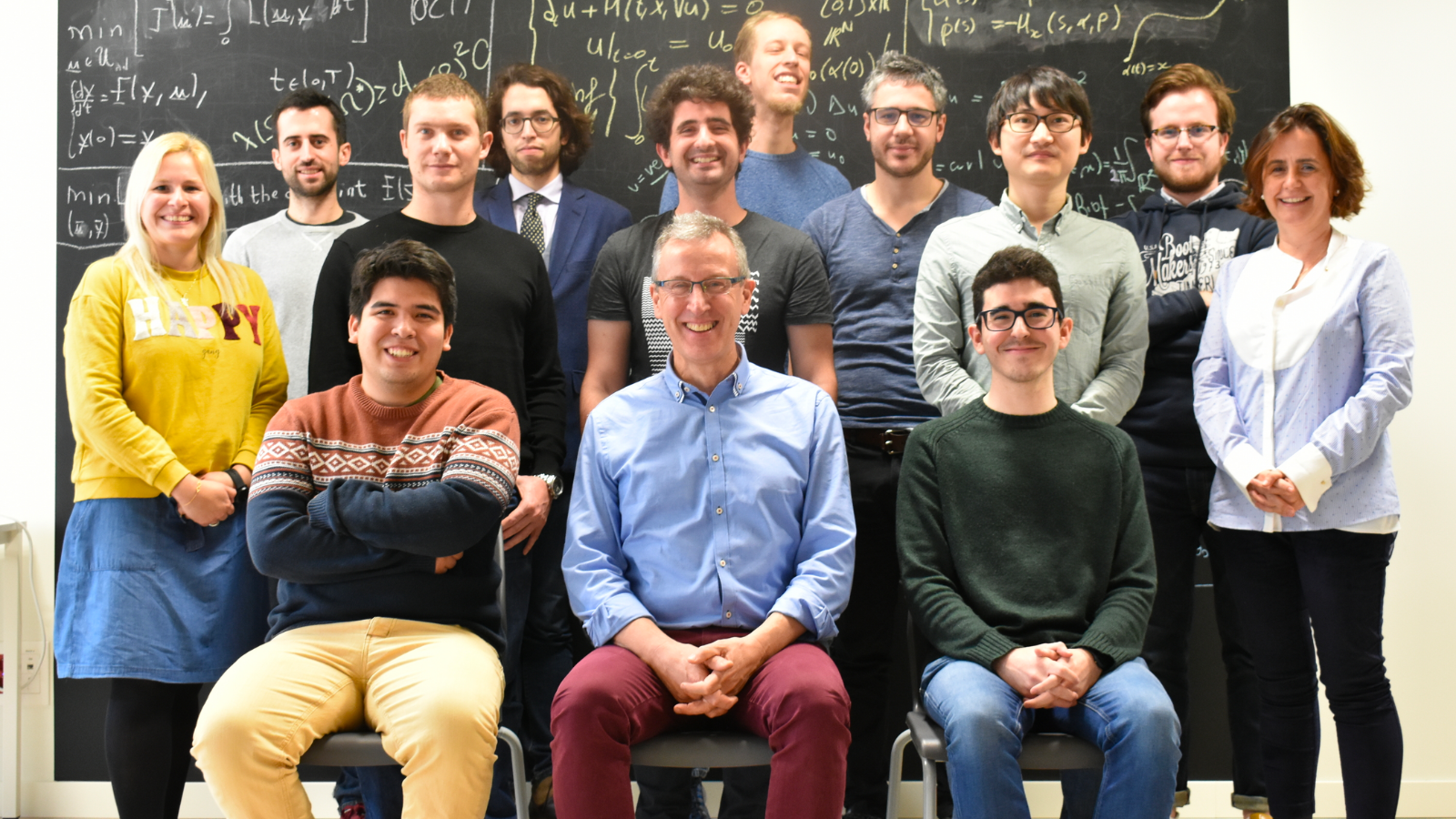

Enrique Zuazua (Eibar, Basque Country – Spain, 1961) holds an Alexander von Humboldt Professorship at the Friedrich–Alexander University (FAU), Erlangen (Germany). He is also the Director of the Chair of Computational Mathematics at Deusto Foundation, Universidad de Deusto (Bilbao, Basque Country – Spain), where he led the research team funded by the ERC Advanced Grant DyCon project (2016-2022). In addition, he has been a Professor of Applied Mathematics at the Department of Mathematics of the Autonomous University of Madrid since 2001, where he holds a Strategic Chair.

ERC DyCon project

DyCon: Dynamic Control is a European project funded by the European Research Council – ERC (2016 – 2022), focused on making a breakthrough contribution in the broad area of Control of Partial Differential Equations (PDE) and their numerical approximation methods by addressing key unsolved issues that appear systematically in real-life applications. The field of PDEs, together with numerical approximation, simulation methods and control theory, has evolved significantly to address industrial demands.

Our latest!

Control of neural transport for normalizing flows

A Two-Stage Numerical Approach for the Sparse Initial Source Identification of a Diffusion-Advection Equation

Gaussian Beam ansatz for finite difference wave equations

Long-time convergence of a nonlocal Burgers’ equation towards the local N-wave

Optimal design of sensors via geometric criteria

Spectral inequalities for pseudo-differential operators and control theory on compact manifolds

FAU DCN-AvH Seminar: Natural gradient in evolutionary games

FAU MoD Lecture: Learning-Based Optimization and PDE Control in User-Assignable Finite Time

CCM double-seminar: “Optimal control of mixed local-nonlocal parabolic PDE” and “Nonlinear Feedback Control Design for Fluid Mixing”

Enrique Zuazua: 2022 W.T. and Idalia Reid Prize by SIAM

DeustoCCMSeminar: Null controllability of a nonlinear age, space and two-sex structured population dynamics model

Looking for our DyCon blog posts?